Book Review: Superforecasting

Today’s book review is Superforecasting: The Art and Science of Prediction, by Philip E. Tetlock and Dan Gardner.

When I explain my work as an actuary to people not involved in finance, I explain that I work out how much money the insurance company needs to set aside from the premiums it receives to make sure it can pay all the claims that come in. In other words, I need to make some kind of prediction of the future.

So when I came across this book, I really had to read it.

That said, the kind of forecasting it covers is much broader than financial forecasting. Some of the questions we encounter in the first chapter include:

- Will North Korea detonate a nuclear device before the end of this year?

- Will Russia officially annex additional Ukrainian territory in the next three months?

- How many additional countries will report cases of the Ebola virus in the next eight months?

Actually that last one is probably quite relevant to actuaries, at least those working for travel insurers or life insurers. So is there a way to forecast the answers to questions like these? And how do you get better at it?

Back in the 1980s, Tetlock started a study of expert forecasters to try to work out what made a good forecaster. Because he was looking at very long-term forecasts, he didn’t publish the final results until 2005. He found two statistically distinguishable groups of experts. One group generally did as well as, or (in long-term forecasts) worse than, random guessing. The other group consistently did slightly better than random chance. Tetlock and Gardner call the first group hedgehogs and the second foxes, borrowing the distinction from the ancient Greek poet Archilochus:

The fox knows many things but the hedgehog knows one big thing.

When forecasting, foxes beat hedgehogs. Hedgehogs tended to have one big idea – one narrative about how things work (some were socialists, others were free market fundamentalists, and still others were environmental doomsayers). They tended to ignore information that didn’t fit their narrative, and they generally did worse than random chance. Foxes, on the other hand, were more pragmatic experts who drew on many analytical tools, and gathered as much information from as many sources as they could. They were also much more likely to change their view when new information appeared.

Foxes beat hedgehogs. And the foxes didn’t just win by acting like chickens, playing it safe with 60% and 70% forecasts where hedgehogs boldly went with 90% and 100%. Foxes beat hedgehogs on both calibration and resolution. Foxes had real foresight. Hedgehogs didn’t.
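For readers who want to see how a comparison like this can be scored, here is a minimal sketch of the Brier score, a standard scoring rule that rewards both calibration and resolution. It uses the common binary form, which runs from 0 (perfect) to 1 (always confidently wrong); other formulations are scaled differently. The forecasts and outcomes are made-up illustrative numbers, not data from the study, and the names `brier_score`, `cautious` and `bold` are my own.

```python
def brier_score(forecasts, outcomes):
    """Mean squared difference between forecast probabilities and 0/1 outcomes.

    Lower is better. Always forecasting 50% scores exactly 0.25.
    """
    if len(forecasts) != len(outcomes):
        raise ValueError("forecasts and outcomes must have the same length")
    if not forecasts:
        raise ValueError("need at least one forecast")
    return sum((p - o) ** 2 for p, o in zip(forecasts, outcomes)) / len(forecasts)


# Hypothetical example: five yes/no questions (1 = it happened, 0 = it did not).
outcomes = [1, 0, 1, 1, 0]

# A cautious forecaster who leans the right way each time.
cautious = [0.7, 0.3, 0.6, 0.7, 0.4]

# A bold forecaster who is very sure, and badly wrong on two questions.
bold = [1.0, 0.9, 1.0, 0.1, 0.0]

# A forecaster who always says 50%.
coin_flip = [0.5] * len(outcomes)

for name, forecasts in [("cautious", cautious), ("bold", bold), ("coin flip", coin_flip)]:
    print(f"{name}: {brier_score(forecasts, outcomes):.3f}")
```

In this toy example the cautious forecaster scores about 0.118, the coin flipper 0.25 and the bold forecaster about 0.324. Confident forecasts are only rewarded when they are also right, which is why boldness alone is not enough to win.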

But being right less often doesn’t harm the career of the hedgehog. The study revealed an inverse correlation between fame and accuracy:

…the more famous an expert was, the less accurate he was… Animated by a Big Idea, hedgehogs tell tight, simple, clear stories that grab and hold audiences… Foxes don’t fare so well in the media. They’re less confident, less likely to say something is “certain” or “impossible”, and are likelier to settle on shades of “maybe”.

So to be a superforecaster, you need to let go of the Big Idea. But what else do you need? There are quite a few key attributes, according to the authors:

- Unpack the question – sometimes called Fermi estimation. Whatif gives a great explanation. This involves unpacking the question into many different subcomponents. The example in the book is thinking about the question “will polonium be found in Yasser Arafat’s body?” To answer that question, we don’t need to get into motive, or who would poison Yasser Arafat. Instead, we need to understand how polonium works, and whether it was even possible. Then we need to think about the many different ways that polonium could have got into his body (including intentional contamination). Breaking down the problem into component parts helps you think through the possibilities.

- Leave no assumptions unscrutinised – you need to think about all the assumptions you’ve made while unpacking the problem. In the example above, one assumption you might make without noticing is that polonium could only be found if Yasser Arafat had been deliberately poisoned. But interference, such as intentional contamination, was also possible.

- Dragonfly Eye – in other words the ability to obtain many different perspectives on the problem. Another way of describing this is active open-mindedness. Superforecasters are constantly testing their perspectives, and looking for ways to think differently about a problem.

For superforecasters, beliefs are hypotheses to be tested, not treasures to be guarded.

- Adopt the outside view – you need to consider the base rate of something occurring. For example, if you are asked how likely it is that there will be an armed clash between China and Vietnam, you should start by asking how often such a clash generally happens (rather than immediately jumping into the current political state of relations). If no clash has happened in the last 100 years, then a clash next year is a lot less likely than it would be if clashes happened every five years on average.

- A way with numbers – most superforecasters have a way with numbers. They aren’t necessarily super mathematicians (one of the early examples in the book dropped out of his PhD in mathematics after “I had my nose rubbed in my limitations”) but they are highly numerate, with backgrounds in maths, or computer programming, or some kind of science. The PhD maths dropout regularly builds Monte Carlo simulation models, for example.

- Find the wisdom of crowds – superforecasters spend a lot of time looking for other sources of information about the problem they are trying to solve. And working in teams of forecasters, if you develop ways of capturing everybody’s views, will also improve accuracy.
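To put some rough numbers on the outside view, here is a minimal sketch of the two base rates from the China and Vietnam example. It rests on two assumptions of mine, not the book's: clashes arrive at a constant average rate (a Poisson process), and when nothing has been observed we fall back on Laplace's rule of succession rather than forecasting exactly zero. The function names are hypothetical.

```python
import math


def annual_probability(events_per_year):
    """Chance of at least one event in the next year.

    Assumes events follow a Poisson process with a constant average rate.
    """
    if events_per_year < 0:
        raise ValueError("rate must be non-negative")
    return 1.0 - math.exp(-events_per_year)


def rule_of_succession(event_years, years_observed):
    """Laplace's rule of succession: (k + 1) / (n + 2).

    A simple way to avoid a 0% forecast when an event has not been
    observed at all. `event_years` counts the years with an event.
    """
    if not 0 <= event_years <= years_observed:
        raise ValueError("event_years must be between 0 and years_observed")
    return (event_years + 1) / (years_observed + 2)


# A clash every five years on average: rate of 0.2 per year.
frequent = annual_probability(1 / 5)

# No clash in the last 100 years.
rare = rule_of_succession(0, 100)

print(f"Clash every five years: {frequent:.1%} chance next year")
print(f"No clash in 100 years:  {rare:.1%} chance next year")
```

Under these assumptions the starting point is roughly 18% in the first case and roughly 1% in the second. That is only the opening estimate; the inside view (the current state of relations) then adjusts it up or down.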

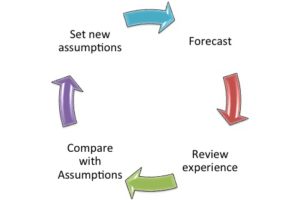

Actuaries are trained to think about the control cycle when predicting the financial future, which they do for pricing, for reserving, and in looking at the sources of variation in experience. That very much involves forecast and review. For the most important sets of assumptions (such as future claim rates on insurance contracts), there is a lot to think about in this book. I’m particularly taken with the many different ways in which superforecasters think widely about their forecasts.

This weekend we are in the middle of another east coast low, with more rain forecast on already sodden catchments recovering from floods and storms up and down the east coast of Australia. Our weather forecasters are getting better and better at predicting events like this. For insurers, the more we can take those forecasts and use them to help our customers (with predictive text messaging, and repairers and claims staff on standby), the better. Superforecasting has many uses, not just political or financial. It is definitely worth reading this book to think more broadly about how to forecast better.

See the original article at the Actuarial Eye.

CPD: Actuaries Institute Members can claim two CPD points for every hour of reading articles on Actuaries Digital.